The AI Customer Service Trap: Why Automation Is Killing Your Retention (And How to Fix It)

The promise of AI customer service is a hot topic in the modern boardroom. Deployed well, automated support offers a seductive vision: zero wait times and marginal costs driven toward zero. For CFOs focused on the bottom line, it looks like the perfect efficiency engine, one that strips away the messy, expensive reality of human labor. But for the customer staring at a screen, this "efficiency" often shows up as a digital wall designed to keep them out. The reality of 2025 is that many businesses are not automating customer service; they are automating customer frustration.

This Efficiency Illusion is the dangerous gap between what support dashboards report ("Tickets Deflected") and what customers feel (trapped). AI excels at the easy 80% of transactional queries, but it fails catastrophically at the critical 20% of high-stakes interactions that determine long-term loyalty. By replacing human empathy with algorithmic loops, companies save marginally on support tickets while bleeding revenue through silent churn. An AI-first model is a financial and operational trap that is often mistaken for optimization. The only sustainable path forward for SMBs is a hybrid architecture that puts humans back in the loop.

The Efficiency Illusion: Why "Ticket Deflection" Is a Vanity Metric

The modern boardroom is fixated on 'Ticket Deflection', the percentage of inquiries handled without a human agent. However, when silence is mistaken for satisfaction, this metric becomes a dangerous blind spot that masks a deepening retention crisis.

The "Deflection" Lie: Why High Ticket Deflection Often Means High Silent Churn

Some corporate boardrooms treat Ticket Deflection as the ultimate measure of customer support success. Charitably described as "empowering" customers to solve their own issues through self-service channels, deflection in practice means fewer interactions with the support team, so businesses treat every unsubmitted ticket as a victory. The metric is fundamentally flawed because it equates silence with satisfaction. In a service economy, that is a dangerous leap in logic: by prioritizing lower contact volume, executives effectively incentivize their teams to build walls between the brand and the customer. The result is a dashboard that looks green because costs are down, while the actual customer relationship is silently rotting from the inside.

Focusing on deflection ignores the reality of silent churn, where the customer simply vanishes without ever lodging a formal complaint. Research indicates that 56% of unhappy customers rarely complain; they simply leave without giving the business a chance to fix the issue. When a bot fails to resolve a problem and offers no clear path to a human, the system records a "success" (no ticket created) while the business suffers a serious failure (lost Lifetime Value). This invisible attrition is the silent killer of SaaS, subscription models, and other online businesses: support costs drop, but revenue bleeds out through the back door.
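To see how a deflection dashboard can hide churn, here is a minimal Python sketch that computes the naive deflection rate alongside an adjusted rate that counts a "deflected" session as a loss if the customer cancelled soon afterwards. The `BotSession` record, the 30-day window, and the idea of joining bot logs to cancellation data are illustrative assumptions, not a feature of any particular helpdesk product.

```python
from dataclasses import dataclass


@dataclass
class BotSession:
    customer_id: str
    ticket_created: bool      # did the bot session escalate into a ticket?
    churned_within_30d: bool  # hypothetical join against billing/cancellation data


def deflection_rates(sessions: list[BotSession]) -> tuple[float, float]:
    """Return (naive deflection rate, deflection rate net of silent churn)."""
    if not sessions:
        return 0.0, 0.0
    deflected = [s for s in sessions if not s.ticket_created]
    # Naive view: every session that produced no ticket counts as a win.
    naive = len(deflected) / len(sessions)
    # Adjusted view: a "deflected" session followed by cancellation is a loss.
    retained = [s for s in deflected if not s.churned_within_30d]
    adjusted = len(retained) / len(sessions)
    return naive, adjusted


sessions = [
    BotSession("a", ticket_created=False, churned_within_30d=False),
    BotSession("b", ticket_created=False, churned_within_30d=True),
    BotSession("c", ticket_created=False, churned_within_30d=True),
    BotSession("d", ticket_created=True, churned_within_30d=False),
]
naive, adjusted = deflection_rates(sessions)
print(f"naive={naive:.0%} adjusted={adjusted:.0%}")  # naive=75% adjusted=25%
```

In this toy data, three of four sessions look "deflected," but two of those customers left; the adjusted figure is the one that tracks retention.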

The "Loop of Doom": Why Circular Logic Triggers "Rage Clicking"

Customers have a visceral, emotional reaction to being trapped by a bot that cannot understand context or nuance. According to qualitative analysis of user sentiment, customers frequently describe interactions with bots as a "prison" or a "loop" in which the AI repeats the same unhelpful answers regardless of the input. This is not a passive annoyance; it is an active barrier that tells the customer their time is worthless compared to the company's efficiency goals. The brand promise of "we care about you" is instantly shattered by the mechanical indifference of a loop that refuses to acknowledge its own failure.

That frustration then manifests physically as "Rage Clicking": rapid, repetitive clicking on unresponsive elements in a desperate attempt to break through the system. Recent research reports a staggering 667% year-over-year increase in rage clicks on mobile interfaces in 2025, and this rise is directly correlated with AI deployment. This digital body language is a screaming red flag that the "seamless" AI experience is actually a high-friction assault on the user. When support channels provoke physical aggression from a customer base, businesses have not solved a problem; they have created a brand crisis.
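Rage clicks are typically detected with a simple heuristic: several clicks on the same element within a short time window. The sketch below applies a sliding window per element; the thresholds (3 clicks within 1,000 ms) and the `Click` record are assumptions chosen for illustration, and real analytics tools define the signal in their own ways.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Click:
    timestamp_ms: int  # when the click happened, in milliseconds
    element_id: str    # which UI element was clicked


def find_rage_clicks(
    clicks: list[Click], min_clicks: int = 3, window_ms: int = 1000
) -> set[str]:
    """Return ids of elements clicked at least `min_clicks` times within `window_ms`.

    The default thresholds are illustrative assumptions, not an industry standard.
    """
    times_by_element: dict[str, list[int]] = {}
    for click in clicks:
        times_by_element.setdefault(click.element_id, []).append(click.timestamp_ms)

    flagged: set[str] = set()
    for element_id, times in times_by_element.items():
        times.sort()
        start = 0
        for end in range(len(times)):
            # Advance the left edge until the window spans at most window_ms.
            while times[end] - times[start] > window_ms:
                start += 1
            if end - start + 1 >= min_clicks:
                flagged.add(element_id)
                break
    return flagged


clicks = [
    Click(0, "send-button"), Click(200, "send-button"),
    Click(450, "send-button"), Click(700, "send-button"),
    Click(0, "help-link"), Click(5000, "help-link"),
]
print(find_rage_clicks(clicks))  # {'send-button'}
```

Grouping by element first matters: fast clicks spread across different buttons are ordinary navigation, while a burst on one unresponsive control is the frustration signal.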

The "Happy Path" Fallacy: Why AI Fails When Things Go Wrong

It is important to concede that AI is an exceptional tool for the "Happy Path": binary, predictable transactions such as tracking an order, resetting a password, or checking a balance. In these low-stakes scenarios, customers actually prefer the speed of a bot because the primary variable is velocity, not empathy. The technology works well when the customer's problem fits neatly into the pre-programmed decision tree. In these specific, narrow contexts, automation is a genuine value-add that reduces friction for both the user and the business.

The trap occurs when companies force AI to handle the "Unhappy Path", which includes complex billing disputes, service failures, or nuanced technical issues. In these moments, the customer is already anxious or annoyed, and they require discretionary judgment that a Large Language Model (LLM) simply cannot provide. When the AI fails to understand the context or, worse, offers a generic apology, it exacerbates the friction, turning a minor issue into a reason to churn. The failure here is not the technology itself, but the strategic decision to deploy it in high-stakes scenarios where human judgment is the only acceptable interface.

The Mobile Friction Gap: Why Automated Support Breaks on Small Screens

The friction of AI support is amplified on mobile devices, where screen real estate is limited and typing can be cumbersome. A chatbot interface that requires lengthy typing, selecting from tiny dropdowns, or navigating complex menus on a smartphone creates a significantly higher cognitive load than a simple phone call or email. This interface failure ignores the context of the mobile user, who is often on the go and seeking a quick resolution, not a text-based negotiation with a robot. The result is a user experience that feels punishing rather than helpful.

This friction gap effectively locks out mobile-first customers from receiving support, driving them to competitors with lower-friction channels. Reports indicate that mobile error clicks and frustration signals are rising sharply as companies force desktop-centric AI solutions onto mobile users. By ignoring the physical limitations of the mobile form factor, businesses are creating a "support wall" that is functionally impassable for a large segment of their audience. This is a user experience failure that directly impacts retention rates, as frustrated mobile users are the quickest to abandon the session and the service.

The Empathy Deficit: Why AI Cannot Save a High-Stakes Account

Operational friction annoys customers, but emotional failure drives them away. While AI can process data at lightning speed, it lacks the genuine empathy required to salvage high-value relationships during a crisis.

The "Uncanny Valley": Why Automated Apologies Insult High-Value Clients

The "Service Recovery Paradox" posits that a customer who experiences a failure but receives an excellent recovery can become more loyal than one who never had a problem. However, this paradox relies entirely on the authenticity of the recovery, which is why AI fails completely in this domain. When a chatbot apologizes, it is perceived as cheap talk because the user knows the algorithm feels no regret and carries no authority to make things right. The apology lands in the "uncanny valley" — close enough to human speech to be recognizable, but artificial enough to be deeply unsettling and insincere.

For a high-value client paying thousands of dollars a year, being fed a scripted apology by an algorithm feels like a calculated insult. It signals that the vendor does not value the relationship enough to assign a human to save it. For a frustrated consumer, it cements the decision to leave. An AI cannot offer discretionary compensation, cannot read the emotional weight of the situation, and cannot say "I understand" with any credibility. By automating the apology, companies are effectively automating the erosion of their most valuable relationships.

The Revenue Gap: Why AI Customer Service Fails to Upsell High-Value Clients

Many view customer support as a cost center, but it is a hidden revenue engine that founders can easily overlook in their quest for efficiency. Human agents play offense, identifying opportunities to upsell or cross-sell during a resolution based on the customer's tone and stated needs. Data shows that human-led teams achieve 76% higher win rates and 70% larger deal sizes because they can read emotional cues and identify the "why" behind a purchase. A skilled human agent knows that a support ticket about storage limits is actually a buying signal for an Enterprise plan.

An AI might solve the immediate technical problem, but it will miss the cues that a client is scaling and ready for an upgrade. The bot is programmed to close the ticket as fast as possible (defense), whereas a human is trained to expand the account (offense). By automating the interaction, companies are saving pennies on the ticket cost but losing thousands in potential Account Expansion revenue. This revenue gap means that an AI-only support model is often net-negative for the business, even if the operational costs appear lower in the financial statements.
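The net-negative claim can be made concrete with a back-of-the-envelope calculation. This minimal sketch compares per-contact savings against revenue lost to churn and missed expansion. The default per-contact costs ($6.00 human, $0.50 AI) are the figures cited in this article; the contact volume, churned-account count, and account value in the example are hypothetical inputs.

```python
def net_annual_impact(
    contacts_automated: int,
    churned_accounts: int,
    annual_value_per_account: float,
    missed_expansion_revenue: float = 0.0,
    human_cost_per_contact: float = 6.00,  # per-contact figure cited in this article
    ai_cost_per_contact: float = 0.50,     # per-contact figure cited in this article
) -> float:
    """Net yearly effect of moving contacts from humans to AI (positive = net savings)."""
    # Operational side: each automated contact costs less than a human-handled one.
    savings = contacts_automated * (human_cost_per_contact - ai_cost_per_contact)
    # Revenue side: accounts lost to automation friction plus upsells nobody spotted.
    lost_revenue = churned_accounts * annual_value_per_account + missed_expansion_revenue
    return savings - lost_revenue


# Hypothetical inputs: 10,000 automated contacts, 20 lost accounts worth $5,000/year each.
print(net_annual_impact(10_000, 20, 5_000))  # -45000.0
```

With these made-up numbers, the bot saves $55,000 in handling costs but the churn costs $100,000. The hard part in practice is attributing churn to support friction, which this sketch simply takes as an input.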

Shadow NPS: The Toxic Brand Sentiment Your Surveys Never Record

Corporate dashboards often show green customer satisfaction (CSAT) scores because they measure the transaction, not the relationship. However, "Shadow NPS" — unsolicited sentiment on platforms like Reddit and X — tells a different, darker story. Users frequently vent about gaslighting bots and the inability to reach a human, describing the experience as "punishing" good customers. This data remains invisible to the executive team because these users rarely fill out the post-interaction survey; they simply vent to the market.

That toxic sentiment is invisible to the company but highly visible to prospective customers researching the brand. A divergence between internal metrics (which look fine) and external reputation (which is burning) is a leading indicator of brand erosion. While the company celebrates its "efficiency," the market is slowly defining the brand as "impossible to deal with." This reputational damage is long-lasting and difficult to reverse, often costing far more in Customer Acquisition Cost (CAC) than the AI system ever saved in wages.

"Agent-Seeking Behavior": The Desperate Search for a Human

The ultimate proof of AI failure is the lengths to which customers will go to bypass it. "Agent-seeking behavior" has become a dominant user pattern, with 62% of customers saying they would rather "hand out parking tickets" than navigate an automated phone tree. Users are now trained to scream "AGENT" at voice prompts or mash the "0" key, treating the support system as an obstacle course rather than a service. When the primary goal of your user is to escape your system, the system has fundamentally failed its purpose.

When avoiding the first point of contact becomes the default way customers deal with a business, the friction itself tells them their time is worth less than your support budget. That is a fatal message in a competitive market. A customer who has to fight to reach a representative internalizes the message that the company does not care about them. In a landscape where switching costs are low, 53% of customers will switch providers after a bad experience. The barrier you built to save money is actually a filter that removes your most impatient, and often most valuable, customers from your books.

The "Human Premium": Why the Future of Support Is Hybrid

The solution is not to abandon technology, but to stop using it as a wall. The most resilient companies are adopting a 'Hybrid Equilibrium' — a strategic architecture that leverages AI for speed and human experts for trust.

The "Strategic Gating" Model: Using AI for Triage, Humans for Trust

AI remains a useful tool that will continue to improve; the goal is to stop using it as a wall and start using it as a sieve. In the "Hybrid Equilibrium," or "Strategic Gating," model, AI handles the noise and humans handle the value. In this architecture, AI acts as Gate 1 (The Concierge), handling transactional volume such as password resets, order tracking, and FAQs. This clears low-value noise from the queue, allowing the human team to focus entirely on retention and resolution.

Crucially, high-emotion, complex, or high-lifetime-value interactions are immediately routed to Gate 2 (The Expert), a human agent. This approach stops the loop of doom by offering an immediate off-ramp to a human when the AI detects frustration or complexity. By gating the experience based on value and complexity, businesses can leverage the speed of automation without sacrificing the retention power of human empathy. This is not about eliminating costs; it is about optimizing connection to ensure the highest ROI on every interaction.
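The gating logic can be sketched as a small routing function. This is a minimal illustration, not a production design: the intent labels, the escalation phrases, the lifetime-value threshold, and the failed-turn limit are all hypothetical, and a real system would obtain intent and frustration signals from its own classifier or sentiment model rather than keyword matching.

```python
from dataclasses import dataclass, field
from enum import Enum


class Gate(Enum):
    CONCIERGE_AI = "gate_1_ai"      # Gate 1: transactional "Happy Path" volume
    EXPERT_HUMAN = "gate_2_human"   # Gate 2: complex, emotional, or high-value cases


# Hypothetical intent labels and phrases, for illustration only.
TRANSACTIONAL_INTENTS = {"password_reset", "order_tracking", "faq", "balance_check"}
ESCALATION_PHRASES = ("agent", "human", "representative", "speak to someone")


@dataclass
class Conversation:
    intent: str
    customer_ltv: float
    messages: list[str] = field(default_factory=list)
    failed_bot_turns: int = 0  # bot replies the customer rejected or repeated past


def route(conv: Conversation, ltv_threshold: float = 10_000.0,
          max_failed_turns: int = 2) -> Gate:
    # Anything outside the known transactional intents goes straight to a person.
    if conv.intent not in TRANSACTIONAL_INTENTS:
        return Gate.EXPERT_HUMAN
    # High-value accounts get a human regardless of intent.
    if conv.customer_ltv >= ltv_threshold:
        return Gate.EXPERT_HUMAN
    # Off-ramp from the loop: an explicit request for a human...
    last = conv.messages[-1].lower() if conv.messages else ""
    if any(phrase in last for phrase in ESCALATION_PHRASES):
        return Gate.EXPERT_HUMAN
    # ...or repeated bot failures on the same conversation.
    if conv.failed_bot_turns >= max_failed_turns:
        return Gate.EXPERT_HUMAN
    return Gate.CONCIERGE_AI


print(route(Conversation("password_reset", 200.0, ["I forgot my password"])).name)  # CONCIERGE_AI
print(route(Conversation("billing_dispute", 200.0, ["I was charged twice"])).name)  # EXPERT_HUMAN
print(route(Conversation("order_tracking", 200.0, ["AGENT"])).name)  # EXPERT_HUMAN
```

Checking intent first means anything off the "Happy Path" never enters the bot loop at all, while the failed-turn counter provides an off-ramp even for routine requests that go wrong.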

Willingness to Pay: Why Human Customer Support Is the New Luxury Differentiator

In an era of "Greedflation" (which, in customer support, means competitors raising prices while automating interactions), human connection is becoming a luxury good. Data confirms that 72% of consumers are willing to pay more for a premium experience that guarantees a human connection. As the market floods with cheap, automated service layers, the "No Bots" promise becomes a powerful signal of quality and respect.

Companies can monetize this demand by positioning "100% Human Support" or "Human-in-the-Loop" as a premium differentiator. Instead of hiding your humans, feature them as a core part of your value proposition. This signals to the market that you are a premium partner, not a budget utility. In a B2B context, this can be the deciding factor for an enterprise buyer who refuses to risk their operations on a chatbot.

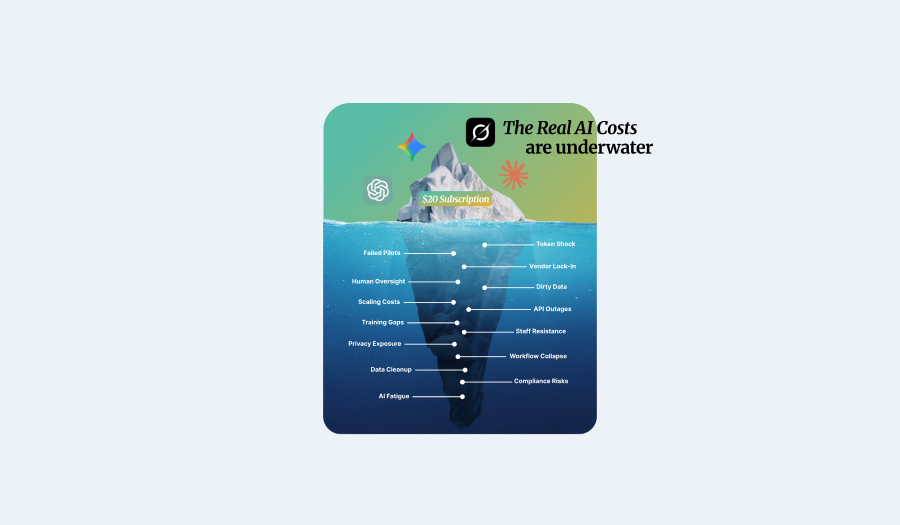

The Cost of Humanity: Why Local Hiring Cannot Solve the AI Crisis

The dilemma for most businesses is economic: they know they need humans to solve the churn problem, but they cannot afford to hire locally. A US-based support agent costs approximately $35k-$40k annually, driving the cost per contact to ~$6.00 compared to $0.50 for AI. For a scaling company, hiring a full domestic team to replace the bots is often a profit-killing impossibility that would destroy the unit economics of the business.

Furthermore, the US market suffers from a skills scarcity in support roles, which are often viewed as temporary gig work rather than a career. Even when you pay the premium for local staff, high turnover and instability often mean a lower quality of service. The challenge for the modern founder is finding a way to access human talent without wrecking the margins that made AI attractive in the first place. The answer lies in decoupling "human" from "local."

"English-Tested" Offshore Customer Support: The AbroadWorks Quality Standard

The solution to the economic bind is global talent, but only if it meets a rigorous quality standard. The fear of "bad outsourcing" (scripted, robotic call centers) is just as well-founded as the fear of AI. AbroadWorks solves this by focusing on "English-Tested" empathy, ensuring that agents are not just literate but linguistically nuanced. You do not just need a warm body in a seat; you need a fluent expert who can navigate the "empathy gap" that AI leaves behind.

By sourcing the top 1% of global customer service talent, businesses can access the human layer at a cost structure that makes the hybrid model viable. AbroadWorks allows you to deploy human agents for a fraction of the cost of local hires, effectively neutralizing the price advantage of AI. With AbroadWorks, "affordable" does not mean "low quality"; it means high-skill talent deployed efficiently. This removes the financial barrier to human support, allowing you to compete on service quality without sacrificing profitability.

Stability as Strategy: Why Offshore Support Agents Outperform Local Gig Workers

Finally, consider that service quality depends on retention and institutional knowledge. In the US, call center turnover rates hover between 30% and 45% annually, driven by the perception of support as a stop-gap job. This constant churn means your customers are always talking to a rookie who does not know your product. In contrast, top-tier offshore roles are viewed as desirable careers, leading to significantly higher retention and stability.

A stable, long-term human agent builds deep institutional knowledge that a transient local hire never will. By building a dedicated global team, your business is investing in a workforce that stays, learns, and builds lasting relationships with your customers, not just cutting costs. Stability converts your support team from a revolving door into a strategic asset. The result is a support function that gets smarter over time, delivering the high-touch experience that retains customers and drives expansion.

Frequently Asked Questions (FAQ)

- Does AI actually save money in customer service? While AI reduces the cost per ticket (often to ~$0.50), it can increase the total cost by driving "Silent Churn." If automated friction causes high-value customers to leave, the revenue loss from churn often exceeds the operational savings from the bot.

- What is "Shadow NPS"? Shadow NPS refers to unsolicited customer sentiment found on social platforms like Reddit and X. Unlike corporate surveys (CSAT), which often look positive because they measure completed interactions, Shadow NPS reveals the raw, unfiltered frustration of users trapped in AI loops.

- Can AI chatbots create legal liability? Yes. In Moffatt v. Air Canada (2024), British Columbia's Civil Resolution Tribunal held Air Canada liable for inaccurate information its website chatbot gave a customer, rejecting the argument that the chatbot was a separate entity responsible for its own statements. The decision undercuts the "black box" defense: companies can be held responsible for their AI's errors.

- What is the "Hybrid Model" of support? The Hybrid Model uses AI for "Gate 1" interactions (simple, transactional queries) and immediately routes complex or high-emotion queries to "Gate 2" human agents. This ensures efficiency without sacrificing the empathy required for retention.

Conclusion: Stop the Loop, Start the Conversation

The "AI Customer Service Trap" is built on the false belief that efficiency equals effectiveness. It does not. While AI can process data at lightning speed, it cannot build trust, and in the subscription economy, trust is the only currency that matters. The hidden costs of "Silent Churn," legal liability, and brand erosion far outweigh the pennies saved on a deflected ticket.

The smart strategic play is not to choose between "cheap bots" and "expensive locals," but to deploy a managed, hybrid architecture. By using AbroadWorks to put humans back in the loop, you turn your customer support function from a frustration engine back into a retention powerhouse. It is time to break the loop and start the conversation: your customers are waiting to talk to you, and if they cannot, they will talk to your competitor.